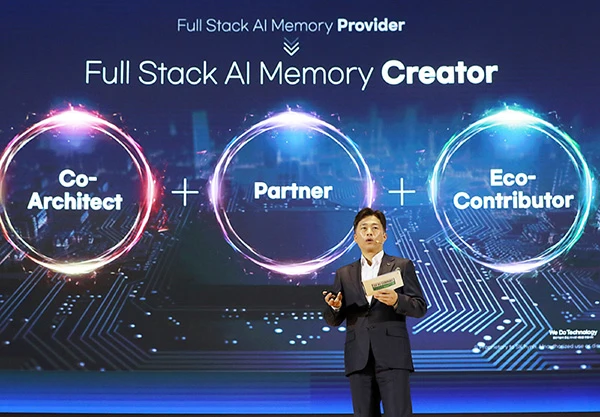

On November 3, at the "SK AI Summit 2025" held in Seoul, South Korea, Kwak Noh-Jung, CEO of SK hynix—a leading South Korean memory chip manufacturer—officially unveiled the company’s new vision of becoming a "Full Stack AI Memory Creator." This initiative aims to deepen SK hynix’s position as a memory leader ahead of the upcoming AI era.

Kwak Noh-Jung pointed out that while AI adoption is accelerating and driving explosive growth in information traffic, the hardware supporting this growth has not kept up. Memory performance in particular has failed to keep pace with advances in processors, creating a barrier known as the "Memory Wall." As a result, memory chips are no longer just ordinary components; they are evolving into core value products in the AI industry.

To date, SK hynix has served as a "Full Stack Memory Provider," focusing on timely delivery of products that meet customer needs. However, as the importance of memory grows, SK hynix believes that the role of a mere "provider" is no longer sufficient to meet market demands. Thus, its new goal of becoming a "Full Stack AI Memory Creator" signifies that SK hynix will act as a co-architect, partner, and eco-contributor in the AI computing field. It will exceed customer expectations and work collaboratively within the ecosystem to solve the challenges faced by clients.

To realize this new vision, Kwak Noh-Jung also revealed SK hynix’s latest product lineup, including Custom HBM, AI DRAM (AI-D), and AI NAND (AI-N). This lineup moves away from the traditional computing-centric approach to memory solutions and toward diversified, expanded memory functions. The goal is to use computing resources more efficiently and to resolve AI inference bottlenecks at the structural level.

Regarding Custom HBM, SK hynix emphasized that the AI market is shifting its focus from commodity products to inference efficiency and optimization. Consequently, HBM is evolving from a conventional product into a customized one. Custom HBM integrates specific functions of GPUs and ASICs into the HBM base die to reflect customer needs. This maximizes the performance of GPUs and ASICs while reducing the power consumed in transferring data to and from HBM, thereby significantly improving system efficiency.

In response to market demands, SK hynix has further segmented AI DRAM (AI-D) to provide memory solutions best suited for the needs of each field:

1. AI-D O (Optimization): A low-power, high-performance DRAM designed to reduce Total Cost of Ownership (TCO) and improve operational efficiency. This category includes MRDIMM (Multiplexed Rank Dual In-line Memory Module), SOCAMM (Small Outline Compression Attached Memory Module, a low-power DRAM module for AI servers), and LPDDR5R (low-power DRAM for mobile products with enhanced Reliability, Availability, and Serviceability (RAS) features).

2. AI-D B (Breakthrough): Solutions aimed at overcoming the "Memory Wall," featuring ultra-high-capacity memory and flexible memory allocation. This category includes CMM (CXL Memory Module; Compute Express Link, or CXL, is a next-generation interface for efficiently connecting CPUs, GPUs, memory, and other components) and PIM (Processing-In-Memory, a next-generation technology that integrates computing capabilities into memory to address data movement bottlenecks in AI and big data processing).

3. AI-D E (Expansion): Designed to expand DRAM use cases, including HBM, beyond data centers to fields such as robotics, mobility, and industrial automation.

Beyond DRAM, SK hynix is also developing three next-generation storage solutions for AI NAND (AI-N):

1. AI-N P (Performance): Focused on ultra-high performance, this solution aims to efficiently process large volumes of data generated by large-scale AI inference tasks. By minimizing bottlenecks between storage and AI operations, it significantly improves processing speed and energy efficiency. SK hynix plans to design NAND and controllers with new architectures and target sample release by the end of 2026.

2. AI-N B (Bandwidth): Expands bandwidth through vertical stacking of semiconductor dies, serving as a solution to compensate for limitations in HBM capacity growth. The key lies in combining HBM’s stacking structure with high-density, cost-effective NAND Flash.

3. AI-N D (Density): Enhances cost competitiveness by achieving ultra-high capacity. This high-density solution is suitable for storing large amounts of AI data with low power consumption and low cost. SK hynix aims to increase storage density from the terabyte (TB) level of current QLC-based Solid-State Drives (SSDs) to the petabyte (PB) level, and develop a mid-tier storage solution that combines the speed of SSDs with the cost-effectiveness of HDDs.

Kwak Noh-Jung emphasized that in the AI era, companies that create stronger synergies and superior products through collaboration with customers and partners are expected to succeed. As part of its cooperation with global leaders, SK hynix not only partners with NVIDIA on HBM but also uses NVIDIA Omniverse to enhance fab productivity through "fab digital twins" technology. It also maintains long-term cooperation with OpenAI to supply high-performance memory. Additionally, SK hynix is working closely with TSMC on logic process base dies required for next-generation HBM.

Furthermore, in terms of storage technology, SK hynix is collaborating with SanDisk to jointly develop global standards for HBF (High-Bandwidth Flash). It is also partnering with NAVER Cloud to optimize next-generation AI memory and storage products for real-world data center environments. Looking ahead, SK hynix will continue to prioritize customer satisfaction, work with partners to overcome limitations, and pioneer the future.

(Reprinted from https://news.eccn.com/)