Responding to a widely circulated online rumor that "Alibaba Cloud purchased 150,000 GPUs from Cambricon", Alibaba Cloud issued an official statement. It said that it has always followed a "one cloud, multiple chips" strategy and actively supports the development of the domestic supply chain, but that the report of a 150,000-unit Cambricon GPU purchase is untrue.

Some media outlets had earlier reported that Alibaba urgently raised its order for the Cambricon Siyuan 370 to 150,000 units. Had the order been real, it would have marked a major breakthrough for domestic GPUs in cloud computing. The Siyuan 370, which Cambricon builds on a 7nm process, delivers 256 TOPS of INT8 compute (double that of the previous generation) and supports an integrated training-inference architecture.

Nevertheless, Alibaba Cloud’s denial exposes a deeper tension in the current push to replace imported chips with domestic ones: a significant gap remains between technological breakthroughs and commercialization. In terms of technical compatibility, Cambricon’s Siyuan 370 has been validated in some of Alibaba Cloud’s inference scenarios, but training still relies on high-end chips such as the NVIDIA H100. Although Cambricon’s latest chip, the Siyuan 590, reaches 80% of the H100’s performance, gaps remain in areas such as FP8 mixed-precision computing and software ecosystem adaptation.

The denial reflects market anxiety amid a tight AI computing power supply chain. As the U.S. tightens export controls on high-end AI chips to China, domestic chip makers such as Cambricon and Huawei Ascend have come into focus. However, domestic chips still lag behind leading international products in performance and software ecosystem, so the replacement process should be viewed rationally.

It is reported that Alibaba has long been one of NVIDIA’s largest customers. With changes in the industry landscape, Alibaba is developing a new artificial intelligence chip, which has now entered the testing phase. The chip is mainly designed for a wider range of AI inference tasks and is compatible with NVIDIA’s architecture. Notably, the new chip will no longer be contracted to TSMC for manufacturing; instead, it will be produced by a domestic enterprise.

Alibaba has launched a variety of self-developed AI chips and large AI models. Recently, its semiconductor subsidiary T-Head (平头哥) released the self-developed AI chip HanGuang 800, which uses a 12nm process and is optimized for cloud-based AI training and inference. It can be applied widely in service scenarios such as e-commerce recommendation, intelligent logistics, and cloud computing. The HanGuang 800 has begun large-scale deployment in Alibaba Cloud data centers, where it integrates seamlessly with existing AI ecosystems and significantly reduces users’ migration costs. Zhang Jianfeng, CTO of Alibaba, stated that "the launch of HanGuang 800 is an important milestone in Alibaba Cloud’s 'Chip-Cloud Integration' strategy."

Thanks to the rapid development of artificial intelligence applications, AI-related products account for a steadily rising share of Alibaba Cloud’s revenue from external customers. In the earnings statement, Wu Yongming, CEO of Alibaba, pointed out: "Driven by strong AI demand, Alibaba Cloud Intelligence Group has achieved accelerated revenue growth, and revenue from AI-related products has become an important part of external customer revenue." According to the financial report for the first fiscal quarter, ended June 2025, the cloud computing business was the biggest highlight of the quarter’s earnings. Its revenue reached RMB 33.4 billion, a year-on-year increase of 26% (markedly higher than the 18% growth rate in the previous quarter). The segment’s EBIT (Earnings Before Interest and Taxes) also increased by 26% year-on-year, so profitability improved alongside revenue.

The rapid growth of Alibaba’s cloud computing business is attributed to its heavy investment in AI and cloud infrastructure. On August 29, Alibaba issued an announcement disclosing its latest investment in these areas: in the past quarter, its capital expenditure on AI and cloud infrastructure reached RMB 38.6 billion, and over the past four quarters its cumulative investment in AI infrastructure and AI product R&D has exceeded RMB 100 billion, demonstrating the company’s firm commitment to AI and cloud services.

Data from the China Artificial Intelligence Industry Development Alliance shows that China’s AI chip market reached the 100-billion-yuan level in 2023, but the localization rate was less than 40%. "Self-developed AI chips are the key to building an independent and controllable AI infrastructure," experts from the China Semiconductor Industry Association stated. A number of Chinese companies, including Huawei Ascend and Cambricon, are now actively expanding in the AI chip field, offering a diverse set of domestic replacement options.

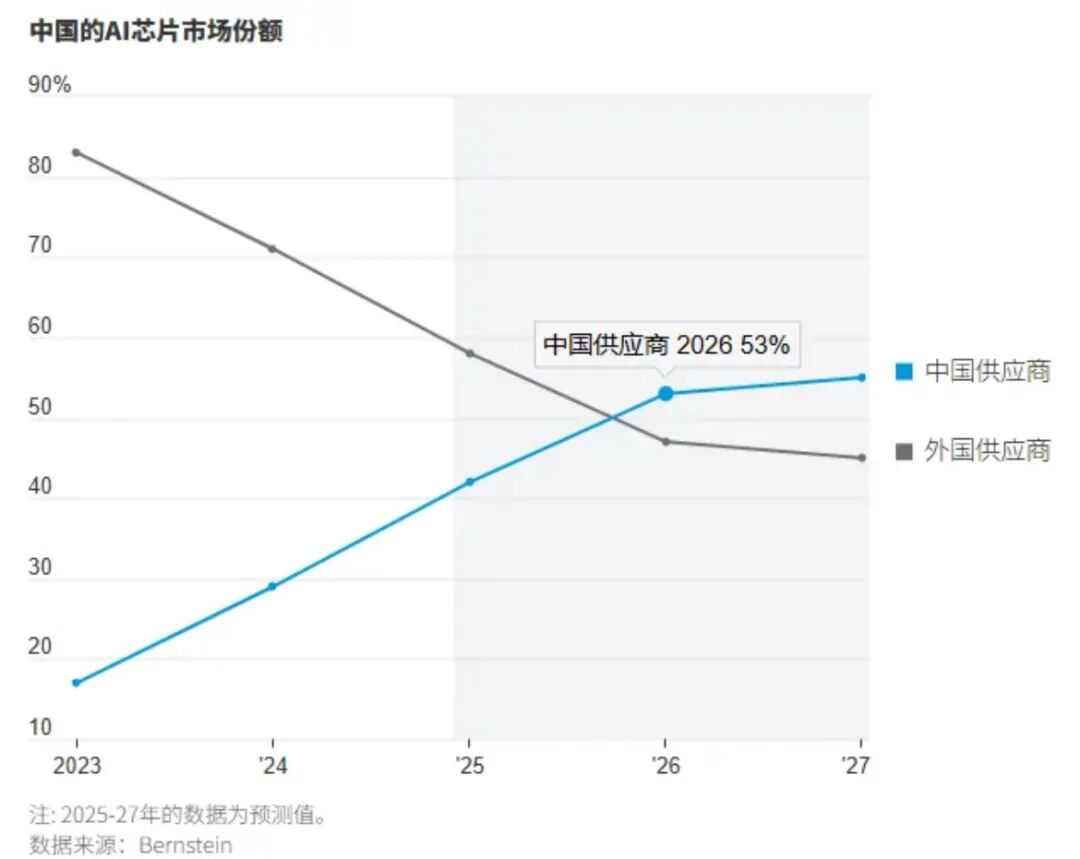

With the rapid development of large model technology, China’s demand for AI computing power is growing by more than 200% annually. Data from Bernstein Research shows that by 2027, domestic suppliers are expected to hold 55% of China’s AI chip market, while the share of foreign suppliers is expected to drop to 45%.

(Reprinted from https://www.eepw.com.cn/)